The Hook

Picture a classroom in 2024. Two students sit side by side. They open identical documents — same words, same paragraphs, same explanations of photosynthesis or the French Revolution. One student was told a teacher wrote it. The other was told an AI did.

Same content. Same font. Same everything.

They take the same test afterward. And the student who read the "AI version" scores lower.

No one changed a single word. The label did the damage.

This is not far-fetched. Researchers studying AI-assisted learning have found patterns that should give every EdTech executive pause: when students believe material came from an AI, their engagement with it can measurably drop. Multiple lines of research suggest that perceived source credibility shapes how deeply students process information, and AI authorship, for now, carries a credibility penalty. The content may be identical. The outcome is not.

Someone made a decision that created this problem. And that decision wasn't made by a student.

The Deep Dive

Here's what the research actually found. When students took notes with AI assistance, they rated the tool as helpful. They felt good about it. But when their learning outcomes were measured — real retention, real recall — the AI-assisted group underperformed. Perceived helpfulness and actual learning moved in opposite directions.

The principle behind that gap has a name in cognitive psychology: desirable difficulty.

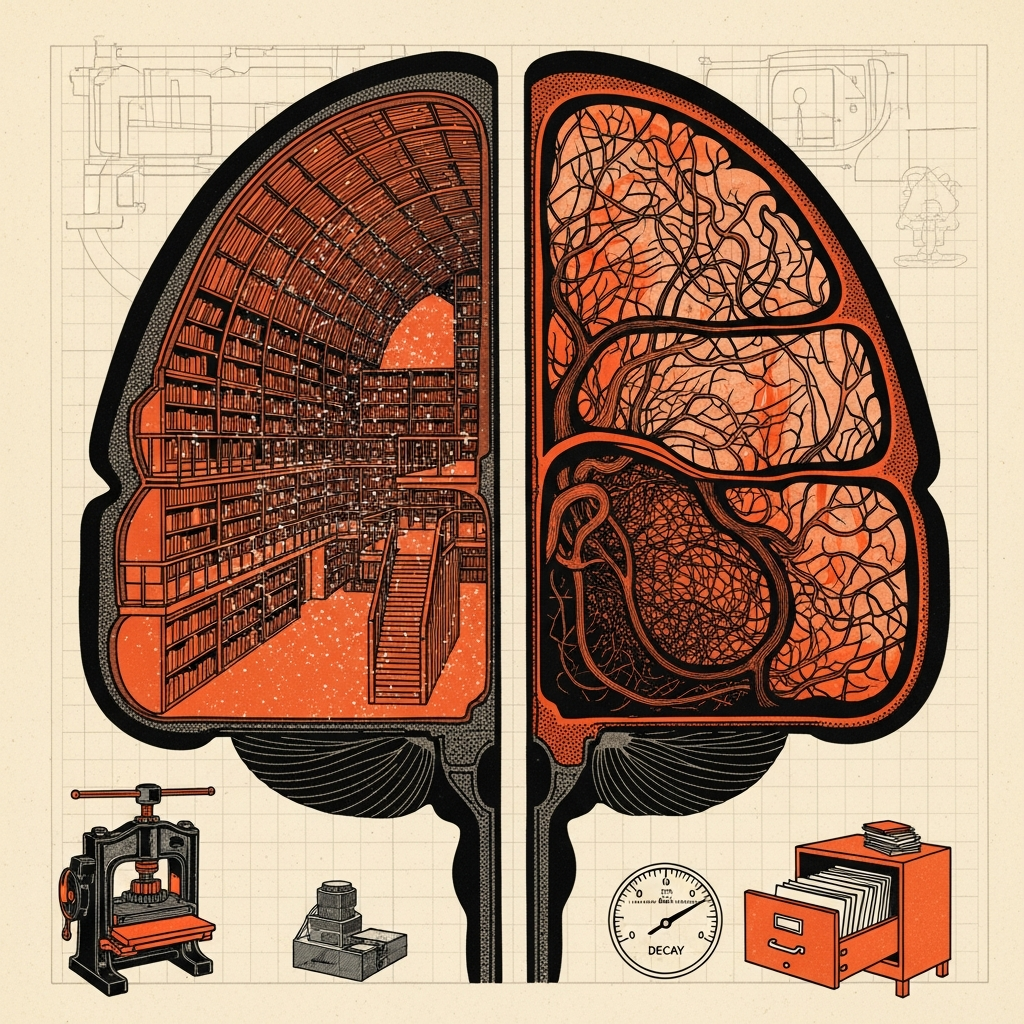

Think of your brain as a muscle. A muscle grows when you stress it. Easy lifts feel great but build nothing. The same principle governs memory. Struggling to understand something, whether by wrestling with a concept or by forcing your mind to connect new information to old, creates stronger neural pathways than passively absorbing polished prose.

Decades of research on what scientists call the "testing effect" confirms this. Attempting to retrieve information from memory strengthens it far more than simply rereading. The struggle is the point. The friction is the feature.

Now enter AI-generated study material. It is, almost by design, frictionless. Clear. Organized. Comprehensive. It removes every rough edge that would have forced a student's brain to engage. And when students know it came from an AI, something additional happens: they discount it before they even start reading.

Their internal monologue shifts. This wasn't written for me by someone who thought carefully about what I need. A machine generated this in seconds. How important can it be?

Psychologists call this a source credibility effect. Your brain doesn't process all information equally. It runs a rapid background check on every source. Human expert? High credibility. Elevated attention. Deeper processing. Algorithm? Lower credibility. Shallow processing. Less retention.

The problem isn't the AI. The problem is the label.

And here is where it gets genuinely uncomfortable for educators. Transparency — telling students the truth about where their material came from — is an ethical obligation. Schools committed to honesty cannot hide the AI's role. But if source credibility effects hold, that transparency may reduce learning outcomes. Honesty and effectiveness can end up pulling in opposite directions. Call it the disclosure tension: not an established scientific term, but a real design problem that researchers and educators are only beginning to grapple with.

Who benefits? EdTech companies, for one. They can sell AI tutoring tools, watch adoption numbers climb, and point to student satisfaction scores. Students like AI-generated content. It feels helpful. The damage shows up later, on exams, on retention tests, in the gap between what students think they learned and what they actually remember.

Who loses? Students. Specifically, students who don't have access to human tutors, enriched classrooms, or teachers with small enough class sizes to provide genuine individual attention. The students AI was supposed to help most.

Who made the call? Not a single villain in a boardroom. This one emerged from a collision of well-intentioned decisions. Researchers pushed for AI transparency. Ethicists demanded disclosure. Developers optimized for user satisfaction rather than learning outcomes. Administrators adopted tools based on cost and convenience. No single person designed this trap. But the trap is real.

There's a parallel here worth understanding. In the 1990s, a wave of "learning styles" research convinced educators that students learned better when material matched their preferred style: visual, auditory, or kinesthetic. Schools redesigned curricula around this idea. Teachers spent years tailoring lessons. Subsequent research found no reliable empirical support for the core claim, that matching instruction to a student's preferred style actually improves outcomes. Students' preferences themselves were never disproven; what collapsed was the idea that teaching to them made a measurable difference. But the belief persisted because it felt true. Students reported preferring material in their "style." Satisfaction scores went up. Learning outcomes did not reliably improve.

The AI disclosure tension risks becoming the same story. Students feel helped. Administrators see adoption. Researchers measure the gap nobody wants to talk about.

The proposed mechanism is simple enough to explain to anyone. When your brain believes information came from a credible, effortful human source, it treats that information as worth storing. It allocates attention. It cross-references. It builds connections. When your brain believes information was generated cheaply and instantly, it files it in the mental equivalent of a temporary folder. Easy come, easy go.

This isn't irrational. It's a heuristic that likely evolved over millennia of social learning. Humans learned from other humans who had skin in the game: hunters who survived, elders who remembered, teachers who cared. The credibility of the source was a reliable proxy for the value of the information. AI breaks that proxy without replacing it.

Why It Matters

The stakes here are not abstract. Hundreds of millions of dollars are flowing into AI-powered educational tools right now. School districts are signing contracts. Governments are funding pilots. The pitch is equity: give every student access to a personalized, infinitely patient tutor.

But if the disclosure tension holds — if students' awareness of AI authorship measurably reduces how deeply they engage with what they learn — then we risk building a system that is most harmful to the students it was designed to serve. Wealthy students with human tutors will keep learning the hard way, with friction, with struggle, with the desirable difficulty that builds lasting knowledge. Students relying on AI tools will get smooth, satisfying, forgettable content.

The solution is not to hide the AI. That road leads somewhere worse.

The solution is to understand what the brain actually needs — and design AI tools that reintroduce productive friction rather than eliminate it. Tools that ask students questions instead of answering them. Tools that force retrieval instead of enabling passive reading. Tools that use AI's power not to generate polished summaries, but to generate the right struggle at the right moment.

That product is harder to build. It's less satisfying to use. Students will probably rate it lower on feedback forms.

It will also actually teach them something.

The decision that matters now isn't whether to use AI in classrooms. That ship has sailed. The decision is whether we optimize for how learning feels or how learning works. Someone in an EdTech company, a curriculum office, or a school board will make that call in the next eighteen months.

Watch who they are. Watch what they choose. Follow the money to the outcome data they don't publish.

Follow The Breadcrumbs: Read Make It Stick: The Science of Successful Learning by Peter C. Brown, Henry L. Roediger III, and Mark A. McDaniel. It's a dossier on what cognitive science knows about how memory actually works, and why the most effective learning almost always feels harder than it should.

Students processing identical study material may retain less of it simply because they believe an AI wrote it — meaning the most dangerous thing about AI in education right now isn't the content it produces, it's the credibility penalty the label carries.

References

- Bjork, E. L. & Bjork, R. A. (2011). Making things hard on yourself,